How to write good AI prompts as a PM?

Product manager’s guide to crafting effective AI prompts

Naman Arora

November 12, 2025

I joined an SF-based startup in the summer of 2023 as part of my internship. For context, this was my first time working as a product manager; before this I was a software engineer with around 4 years of development experience. When I decided to pursue an MBA in late 2022, ChatGPT and other AI tools were still in their early phases. Most people knew they could help with writing emails, but not much beyond that. Things really took off from 2023 onwards, when GPT-4 was announced and the AI evals race began.

Here I was responsible for creating a mental health chatbot. I won't go into the technical details of how it worked, but to keep things simple, you can assume that you provide a prompt to the chatbot which defines how it will behave in different situations. If your prompt is weak, things can go awry very quickly. The chatbot can start making up random facts, drift off topic and start discussing Taylor Swift's new album with you, or start telling you 2+2=6… You see the pattern. So having strong prompts in place is a must. Back then I had zero context on what prompting was, so like anyone else, I took a course on prompt engineering to give myself a head start and learnt the rest on the job.

Here are some of the prompting techniques I learnt during my 2 years working as an AI product manager. These AI prompt tips are applicable as of Nov 2025:

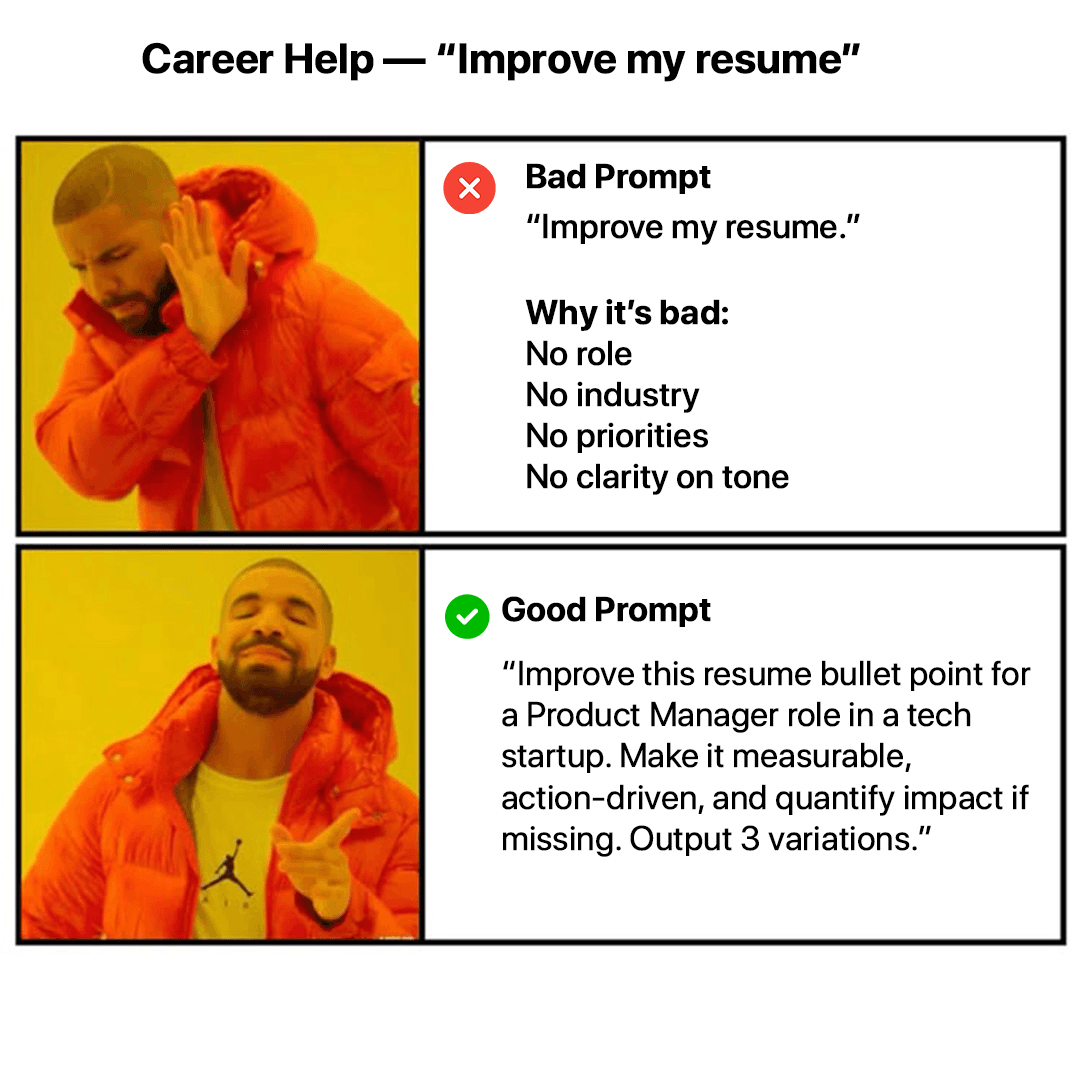

Career Help - "improve my resume"

1. Prompt it like a baby – it may sound silly, but if you want accurate results from LLMs like ChatGPT, Claude, Gemini, etc., you need to write the prompt as if you were explaining the task to a baby: spell out every detail and assume nothing is obvious. Here’s an example of how to give a good AI prompt:

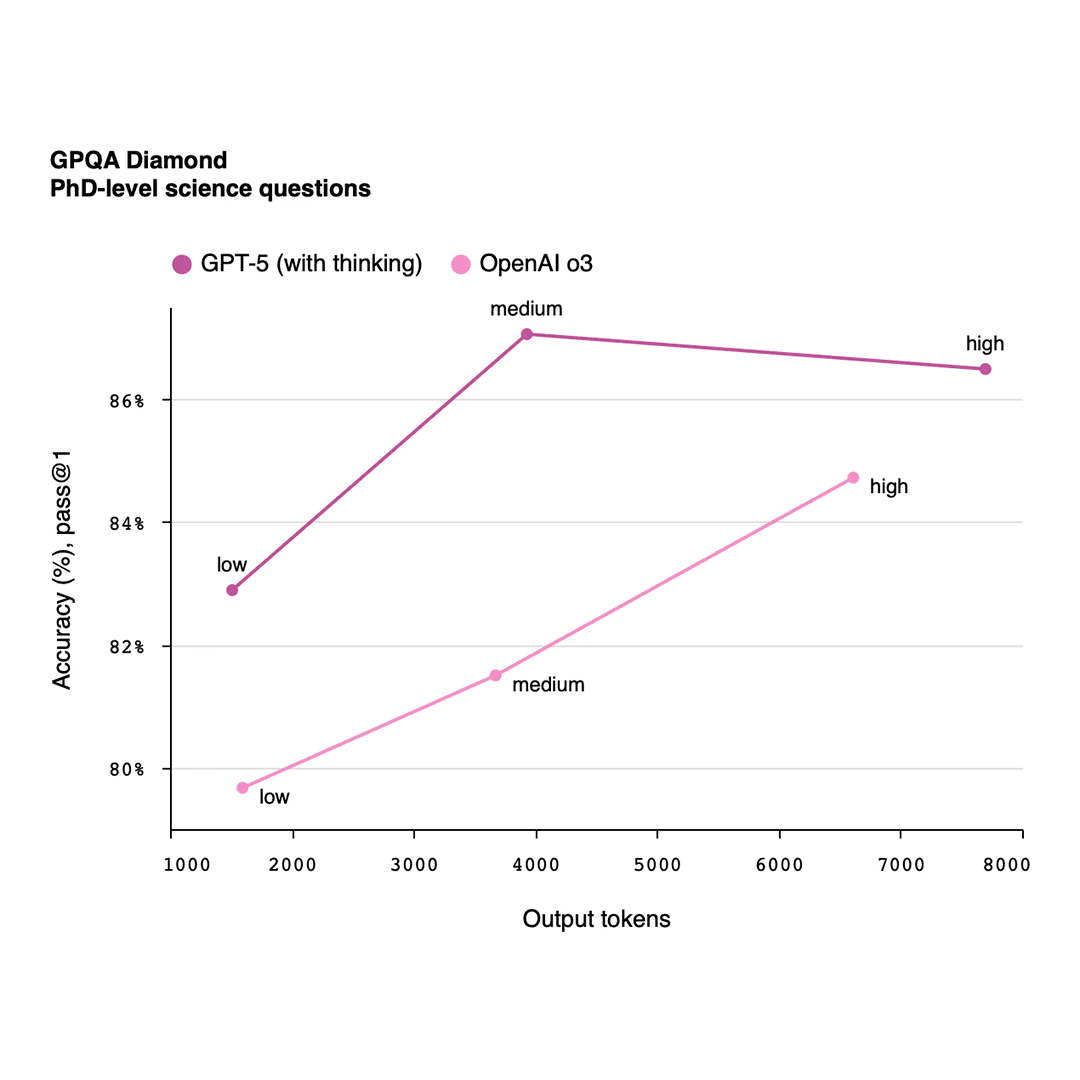
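As a rough sketch of what "spelling out every detail" can look like in practice, the snippet below assembles a detailed prompt from explicit fields and contrasts it with a vague one. The `build_prompt` helper, its field names, and the resume wording are all assumptions made for illustration, not a standard template.

```python
def build_prompt(task, audience, context, constraints, output_format):
    """Assemble a detailed prompt from explicit fields (hypothetical helper)."""
    lines = [
        f"Task: {task}",
        f"Audience: {audience}",
        f"Context: {context}",
        "Constraints:",
    ]
    # One bullet per constraint so nothing is left implicit.
    lines += [f"- {c}" for c in constraints]
    lines.append(f"Output format: {output_format}")
    return "\n".join(lines)


# The kind of prompt that leaves the model guessing.
vague_prompt = "Improve my resume."

# The same request with the details spelled out (illustrative values).
detailed_prompt = build_prompt(
    task="Rewrite the work-experience section of my resume.",
    audience="Recruiters hiring for product manager roles.",
    context="I was a software engineer for 4 years and am moving into product management.",
    constraints=[
        "Keep every bullet under 25 words.",
        "Start each bullet with an action verb.",
        "Do not invent numbers or achievements.",
    ],
    output_format="A bulleted list, one bullet per achievement.",
)

print(detailed_prompt)
```

The point is not the helper itself but the habit: every field you would otherwise leave to the model's imagination becomes an explicit line in the prompt.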

Graph

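2. Make it Think – well, LLMs technically can’t think, but some tools respond to explicit cues to reason for longer. Claude Code, for example, has documented keywords such as “think”, “think hard” and “ultrathink” that give the model a larger reasoning budget, and many other models tend to do better when asked to reason step by step. When you use these cues, the LLM takes extra time to come up with the right solution. If your LLM is not giving you quality results or is acting unpredictably, try asking it to think before it answers.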

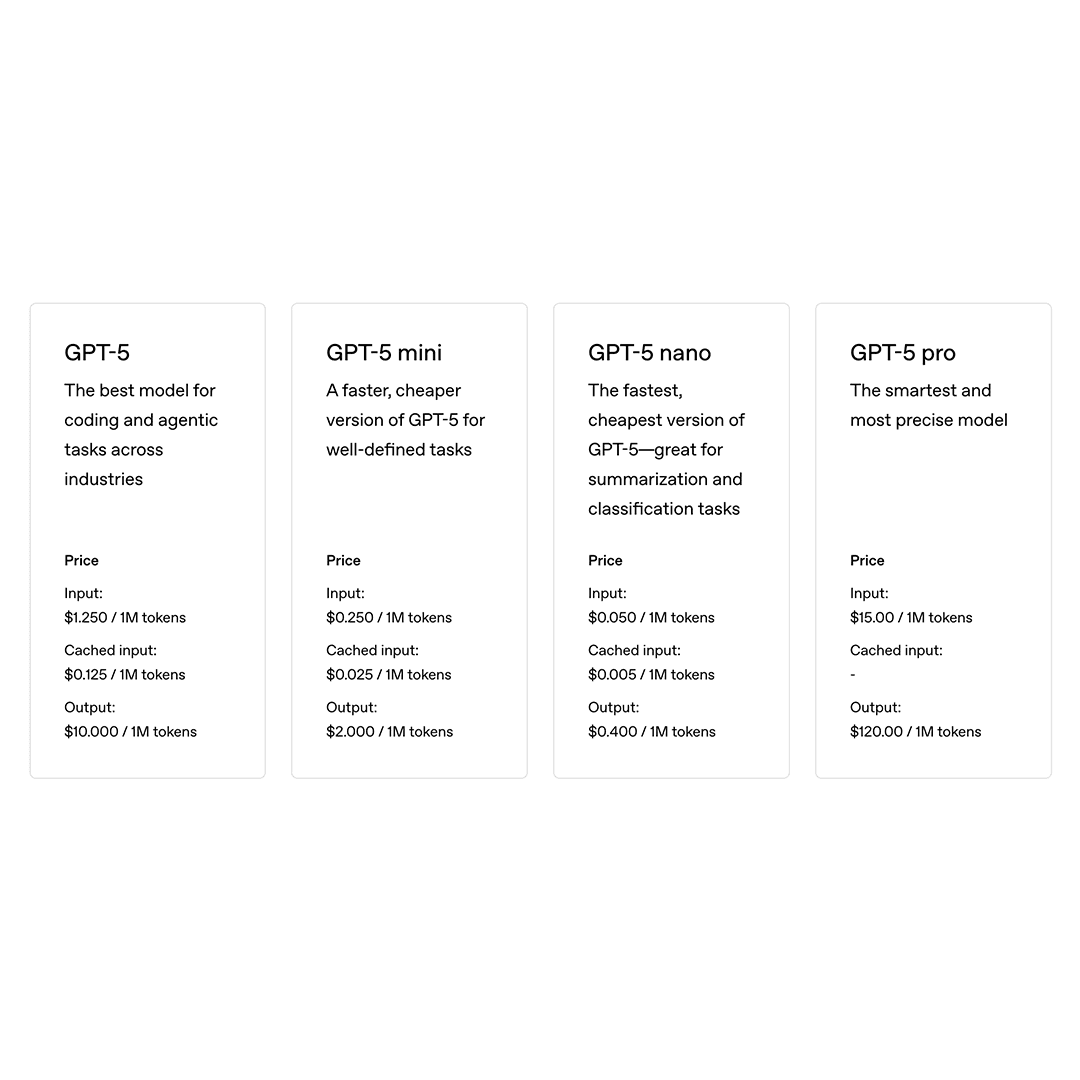
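One way to apply this tip without relying on model-specific keywords is to ask for step-by-step reasoning and a clearly marked final answer, then pull out only the answer. This is a minimal sketch: the `ANSWER_MARKER` string and both helper functions are hypothetical, and the sample response is hand-written rather than real model output.

```python
ANSWER_MARKER = "FINAL ANSWER:"  # hypothetical marker; any unique string works


def with_reasoning(question):
    """Wrap a question with an instruction to reason before answering."""
    return (
        f"{question}\n\n"
        "Think through the problem step by step before answering. "
        f"Then give your conclusion on a new line starting with '{ANSWER_MARKER}'."
    )


def extract_answer(response):
    """Return the text after the last marker, or the whole response if it is missing."""
    _, found, tail = response.rpartition(ANSWER_MARKER)
    return tail.strip() if found else response.strip()


prompt = with_reasoning("What is 2+2?")

# Hand-written stand-in for a model response, used only to show the parsing.
sample_response = "2 plus 2 means adding two and two.\nFINAL ANSWER: 4"
print(extract_answer(sample_response))  # prints "4"
```

Keep in mind that the reasoning is part of the output too, so this trades extra output tokens for answer quality.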


3. Outputs are costly – if you work with LLMs via an API, a basic rule of thumb is that output tokens cost more than input tokens. This means that giving the LLM a huge prompt of 100 lines is fine as long as you expect just a few sentences back. For most commercial LLM APIs, the input token price is much lower than the output token price. This is something to keep in mind when you’re on a budget. You can simply add sentences like “Give your output in 200 words” or “Keep the answer brief”. The best AI prompts keep the output limited to what their use case needs.
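To make the arithmetic concrete, here is a small sketch that compares a long prompt with a short answer against a short prompt with a long answer. The two prices are made-up placeholders, not any provider's real rates; check your provider's pricing page for actual numbers.

```python
# Hypothetical prices in USD per 1 million tokens (placeholders, not real rates).
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


def request_cost(input_tokens, output_tokens):
    """Cost of one request in USD under the placeholder prices above."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


# Same total token count, split in opposite ways.
long_prompt_short_answer = request_cost(input_tokens=2_000, output_tokens=100)
short_prompt_long_answer = request_cost(input_tokens=100, output_tokens=2_000)

print(f"{long_prompt_short_answer:.4f}")  # prints 0.0075
print(f"{short_prompt_long_answer:.4f}")  # prints 0.0303
```

With these placeholder prices, the request with the long answer costs about four times as much, even though both requests use 2,100 tokens in total.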


4. You can never guarantee their behavior – I once worked on a project where I had to make the chatbot multilingual. You may think, “What’s the big deal in that? You can simply add the line ‘Reply back in Chinese’, and the LLM will talk in that language.” Well, you are kind of correct. This is what my prompt looked like, but it still spoke in English. Why? Because the instructions in the prompt were written in English! So while interpreting the prompt, the LLM would sometimes get confused about whether to reply in English or Chinese. We fixed this by writing the entire prompt in Chinese.

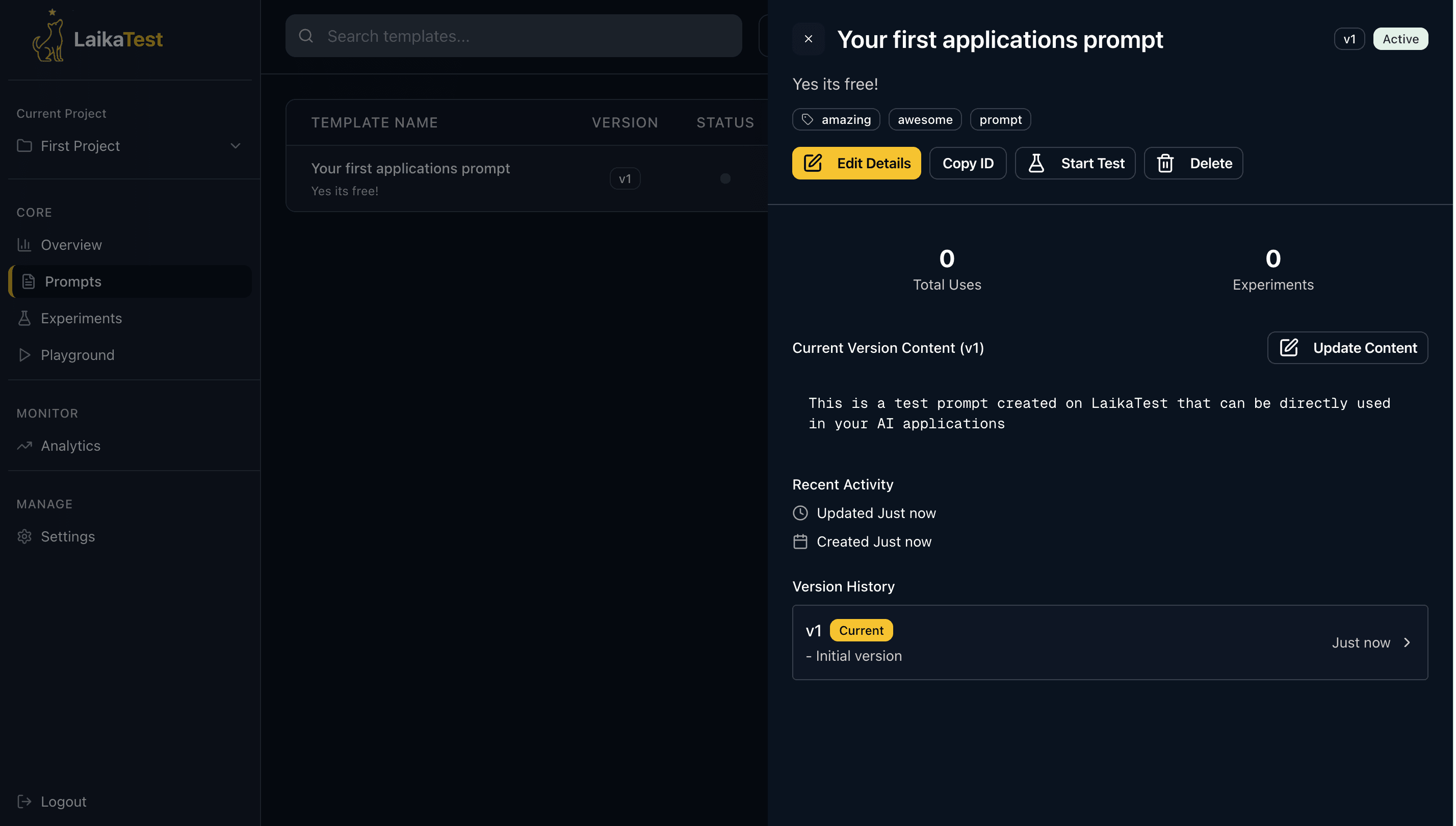
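A minimal sketch of the fix described above, assuming you keep one full system prompt per language instead of an English prompt with a "reply in X" line tacked on. The prompt texts, the language codes, and the `system_prompt_for` helper are illustrative assumptions, not the prompts we actually shipped.

```python
# One complete prompt per language, each written in that language (illustrative text).
SYSTEM_PROMPTS = {
    "en": "You are a supportive assistant. Always reply in English.",
    "zh": "你是一位给予支持的助手。请始终用中文回复。",
}
DEFAULT_LANGUAGE = "en"


def system_prompt_for(language_code):
    """Pick the prompt written in the user's language, falling back to the default."""
    return SYSTEM_PROMPTS.get(language_code, SYSTEM_PROMPTS[DEFAULT_LANGUAGE])


print(system_prompt_for("zh"))
print(system_prompt_for("fr"))  # no French prompt yet, so this falls back to English
```

Because each prompt is written end to end in its own language, the instructions themselves no longer pull the model towards English.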


5. Use prompt versioning from day 0 – One thing that we were super late in adopting was the practice of prompt versioning. In simple words, it lets you manage all your prompts in a single place and keeps a history of the changes made to each prompt. I had a small team, but everyone was maintaining their prompts in Notepad or hardcoding them in the app. Once we started using prompt versioning tools, our lives got a lot simpler. No more wondering who updated the prompt and why. The entire context gets captured in the tool. Langfuse and LangSmith are popular options. If you want something free and easy to understand with unlimited storage, you can check out Prompt Management in LaikaTest.
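To show the idea rather than any particular product, here is a toy in-memory registry that records who changed a prompt and why. It is only a sketch: the `PromptRegistry` and `PromptVersion` names are hypothetical, and this is not how Langfuse, LangSmith, or LaikaTest work internally.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class PromptVersion:
    version: int
    text: str
    author: str
    note: str  # why the prompt was changed
    created_at: datetime


@dataclass
class PromptRegistry:
    """Toy in-memory store: maps a prompt name to its ordered list of versions."""
    history: dict = field(default_factory=dict)

    def save(self, name, text, author, note):
        versions = self.history.setdefault(name, [])
        entry = PromptVersion(
            version=len(versions) + 1,
            text=text,
            author=author,
            note=note,
            created_at=datetime.now(timezone.utc),
        )
        versions.append(entry)
        return entry

    def latest(self, name):
        return self.history[name][-1]

    def get(self, name, version):
        # Versions are numbered from 1, so shift to a list index.
        return self.history[name][version - 1]


registry = PromptRegistry()
registry.save("greeting", "Greet the user.", author="naman", note="first draft")
registry.save("greeting", "Greet the user warmly by name.", author="teammate", note="more personal tone")

current = registry.latest("greeting")
print(current.version, current.author, current.note)
```

A real tool adds persistence, access control, and links to evaluation results, but the core record (text, author, reason, timestamp) is the part that answers "who updated the prompt and why".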

6. Provide it a good backstory – A big problem with LLMs is that, by default, they work in a question and answer format. You ask the LLM something, and it answers you back. There are no follow-up questions, so conversations feel very artificial. People who used our chatbot often complained that the conversations were one-way or not engaging enough. To fix this problem, we tried adding a backstory to our bot. If you ever want to form a strong bond with another person, try to mirror their behavior, and that is what we told the bot to do.
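Here is a rough sketch of what a backstory plus mirroring instructions could look like as a system prompt. The persona name, the backstory text, the behavior rules, and the `build_persona_prompt` helper are all invented for illustration; they are not the prompt our chatbot used.

```python
def build_persona_prompt(name, backstory, behaviors):
    """Combine a persona backstory with conversational rules (hypothetical helper)."""
    rules = "\n".join(f"- {b}" for b in behaviors)
    return f"You are {name}. {backstory}\n\nHow you talk:\n{rules}"


persona_prompt = build_persona_prompt(
    name="Maya",  # made-up persona
    backstory="You are a warm, curious listener who has spent years supporting friends through stressful times.",
    behaviors=[
        "Mirror the user's tone and vocabulary.",
        "End most replies with one open-ended follow-up question.",
        "Keep replies short and conversational.",
    ],
)

print(persona_prompt)
```

The follow-up question rule targets the one-way feel directly, while the mirroring rule is the prompt version of the "mimic their behavior" idea.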

7. Ignore suggestions for fine-tuning – Lastly, since this domain is new and advancing at a rapid rate, many people will come to you suggesting that you should fine-tune your model or use AI agents for some use case. In my experience, 90% of the time you won’t need that. Prompt engineering will give you good enough results in most scenarios. Use fine-tuning only when you want to teach your model something new. If something already exists on the internet, chances are your model already knows about it. So do not go down the rabbit hole of fine-tuning.

Now you know some of the most common traps that any new AI product manager or software engineer can fall into. Prompt engineering is an art which no course can fully teach you. Writing good AI prompts is all about experimenting to see what works and what does not. With every new model release, models become smarter, so you don’t have to dumb down your prompts as much anymore.

For a limited time, we are offering LaikaTest Pro at zero cost. We are building a next-gen experimentation platform for AI. With each new feature, we are trying to bring predictability to LLMs, which are inherently unpredictable.

Tags

#Product Management #AI Prompt Engineering #A/B testing