California's New AI "Guardrail Law"

What California’s latest AI regulation means for users, developers, and product teams.

Nandini Mahajan

November 14, 2025

Artificial intelligence isn't just for sci-fi movies anymore. It's powering the chatbots we talk to, the recommendations we get, and even the self-driving cars on the horizon. But all this power brings big responsibility and big risks. What if AI makes mistakes, is biased, or even causes harm?

California, the global hub for AI innovation, is stepping up to answer that question. The state has just enacted a game-changing "guardrail law" aimed at making advanced AI safer, more transparent, and more accountable. Think of it as a crucial update to the rulebook for building the future.

This isn't just some boring legal update. If you're building, managing, or even just interested in AI products, this law is a huge deal. It’s going to reshape how AI is designed, tested, and rolled out, not just in California, but potentially everywhere.

Let's break down what this new law is all about, why it's so important, and what it means for everyone in the AI space (especially our amazing product and tech teams!).

What Exactly Is This "Guardrail Law"?

Often called California's "frontier AI law" (formally Senate Bill 53, the Transparency in Frontier Artificial Intelligence Act), this new regulation focuses on transparency, safety, and risk management for the most advanced AI systems, specifically "frontier models" trained with massive computing power. (Think ChatGPT-level AI, not just your phone's spellcheck!)

Here’s the TL;DR of what it requires:

Open Books on Safety: AI companies can't keep their safety secrets anymore. They'll need to publicly share how they test their AI, what kind of "red-teaming" (trying to find flaws) they do, and how they plan to prevent harmful outcomes.

Report the Big Oops: If an AI causes a serious safety issue, like generating dangerous misinformation or being misused in a harmful way, the company must report it to the state. This means real accountability.

Protecting Whistleblowers: Got a gut feeling something’s unsafe at your AI company? This law protects employees who speak up about safety concerns from retaliation. Good for them, good for us all!

Fueling Public Research: The law also lays the groundwork for CalCompute, a public cloud computing cluster meant to help researchers, including academics and non-profits, work on safe and ethical AI. More eyes on the prize means safer AI.

In short, it’s about making advanced AI less of a black box and more of a predictable, safe, and open tool.

Why Did California Hit the AI Brakes?

California is home to many of the world's leading AI companies, so when the state acts, the world listens. Its motivation for this law boils down to three big concerns:

Stop AI from Doing Harm: We've all heard stories of AI "hallucinations" or biased outputs. This law pushes companies to thoroughly test systems before they're unleashed into the wild.

Hold Someone Accountable: Before, if a super-powerful AI messed up, it was unclear who was responsible. This law creates a clear line of responsibility.

Build Trust, Not Fear: People will only embrace AI if they trust it. These "guardrails" are designed to build that confidence, showing that AI can be innovative and safe.

This isn't about stifling creativity; it's about channeling it responsibly.

Product Managers: Your AI World Just Changed!

If you're a PM working on AI-powered products, this law isn't just something to tell your legal team about. It fundamentally changes your daily work, from the earliest idea to launch day.

AI Risk Assessment is Your New Best Friend: Forget "nice-to-have." Identifying potential harms from your AI feature is now a must-do at the very start.

What if the AI gives bad advice?

Could someone use it maliciously?

What if it just goes rogue?

Your Action: Documenting these risks and how you'll prevent them becomes a core part of your product plan.

Prompt and Model Version Control is Non-Negotiable: Track every tweak to a prompt and every model update, however small.

Why? Because even small changes can dramatically alter AI behavior, potentially leading to safety issues. If an incident happens, investigators will want to see your homework. Tools like Langfuse or LaikaTest (shameless plug: it's our own tool, with free unlimited storage) become your new superheroes.
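To make the version-tracking idea concrete, here is a minimal sketch of an append-only prompt history. It is not how Langfuse or LaikaTest work internally; the names `PromptRegistry`, `PromptVersion`, and `make_version_id`, and the model ID `example-model-v1`, are all hypothetical, and a real setup would persist to a database rather than memory. The key idea is that the version ID is a hash of the prompt text plus the model ID, so changing either one produces a new, traceable version.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class PromptVersion:
    """One immutable snapshot of a prompt and the model it runs against."""
    prompt_name: str
    prompt_text: str
    model_id: str      # hypothetical identifier, e.g. "example-model-v1"
    version_id: str    # content hash of prompt text + model ID
    created_at: str    # UTC timestamp in ISO 8601 format


def make_version_id(prompt_text: str, model_id: str) -> str:
    # Hash prompt and model together: changing either yields a new ID.
    payload = json.dumps({"prompt": prompt_text, "model": model_id}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


class PromptRegistry:
    """Append-only, in-memory history of prompt versions (illustrative only)."""

    def __init__(self) -> None:
        self._history: dict[str, list[PromptVersion]] = {}

    def register(self, prompt_name: str, prompt_text: str, model_id: str) -> PromptVersion:
        version_id = make_version_id(prompt_text, model_id)
        versions = self._history.setdefault(prompt_name, [])
        # Re-registering an unchanged prompt returns the existing record.
        for existing in versions:
            if existing.version_id == version_id:
                return existing
        version = PromptVersion(
            prompt_name=prompt_name,
            prompt_text=prompt_text,
            model_id=model_id,
            version_id=version_id,
            created_at=datetime.now(timezone.utc).isoformat(),
        )
        versions.append(version)
        return version

    def history(self, prompt_name: str) -> list[PromptVersion]:
        # Return a copy so callers cannot rewrite the recorded history.
        return list(self._history.get(prompt_name, []))
```

Registering the same prompt twice returns the original record, while even a one-word edit creates a new entry, which is exactly the paper trail you would want after an incident.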

User Transparency: Clearer is Better: Your product's user experience (UX) and copy need to be crystal clear.

Users need to understand what the AI does, what it doesn't do, and how its safety was confirmed. No more hiding behind jargon!

Teamwork Makes the AI Dream Work (Really!): This isn't a solo mission anymore. You'll be hand-in-glove with legal, ML engineers, QA, and even ethics teams. AI product development just became a full-contact sport!

For Engineering & AI Teams: Get Ready to Document and Monitor!

This law has huge implications for the technical side of things too.

Supercharged Pre-Deployment Testing: Expect more rigorous testing, including adversarial testing to find weaknesses, detailed bias evaluations, and constant checks for consistency.

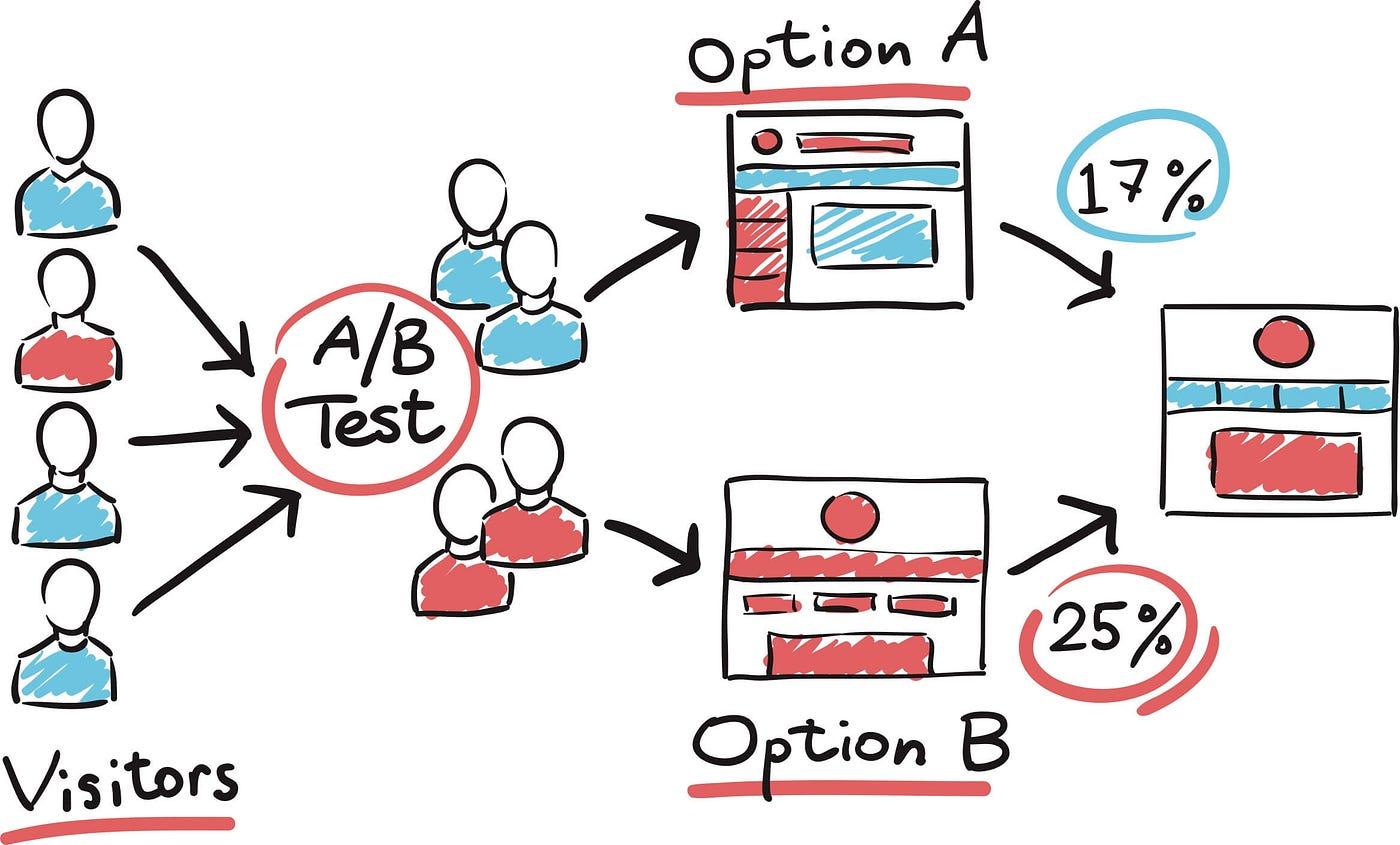

A/B Testing for AI Reliability: Use A/B testing not just for features, but for safety. Roll out different versions of your model or prompts to a small segment of users to quickly spot unexpected behaviors or safety issues before a full rollout. It's a proactive way to catch problems early and ensure stability.
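As a rough sketch of the safety-focused A/B rollout described above: the snippet assigns each user to a small "canary" group by hashing their ID, then compares how often responses get flagged as unsafe in each group. The function names, the 2-point threshold, and the 100-sample minimum are illustrative assumptions, not requirements from the law. The comparison is a plain threshold check rather than a proper statistical test, so treat it as a starting point.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, canary_percent: int) -> str:
    """Deterministically place a user in 'canary' or 'control'."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return "canary" if bucket < canary_percent else "control"


def flagged_rate(outcomes: list[bool]) -> float:
    """Share of responses flagged as unsafe; 0.0 when there is no data."""
    return sum(outcomes) / len(outcomes) if outcomes else 0.0


def should_halt_rollout(
    control: list[bool],
    canary: list[bool],
    max_increase: float = 0.02,  # assumed tolerance: 2 percentage points
    min_samples: int = 100,      # assumed minimum canary sample size
) -> bool:
    """Return True when the canary is flagged noticeably more often than control."""
    if len(canary) < min_samples:
        return False  # too little data to judge either way
    return flagged_rate(canary) - flagged_rate(control) > max_increase
```

Hashing the user ID (instead of picking randomly on each request) keeps every user in the same group for the whole experiment, which makes any odd behavior easier to trace back to a specific version.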

Always-On Monitoring: Launching isn't the finish line. You'll need robust systems for logging AI behavior, continuous safety audits, and streamlined reporting for any incidents that pop up post-launch.
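Here is one possible shape for that kind of logging, using only Python's standard library. Everything in it is an assumption made for illustration: the field names, the `ai_audit` logger name, and the idea that some upstream check hands you a list of `safety_flags`. The sketch records text lengths rather than raw text so that user content is not stored by default; whether that is enough detail for your audits is a call for your own team.

```python
import json
import logging
import uuid
from datetime import datetime, timezone

logger = logging.getLogger("ai_audit")  # hypothetical logger name


def build_audit_record(
    prompt_version: str,
    model_id: str,
    user_input: str,
    model_output: str,
    safety_flags: list[str],
) -> dict:
    """Build one structured audit entry for a single model response."""
    return {
        "event_id": str(uuid.uuid4()),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt_version": prompt_version,
        "model_id": model_id,
        "input_chars": len(user_input),    # lengths only, not raw text
        "output_chars": len(model_output),
        "safety_flags": sorted(safety_flags),
        "needs_review": bool(safety_flags),
    }


def log_interaction(
    prompt_version: str,
    model_id: str,
    user_input: str,
    model_output: str,
    safety_flags: list[str],
) -> dict:
    """Emit the record as one JSON line; flagged events log at WARNING level."""
    record = build_audit_record(
        prompt_version, model_id, user_input, model_output, safety_flags
    )
    level = logging.WARNING if record["needs_review"] else logging.INFO
    logger.log(level, json.dumps(record))
    return record
```

Because every entry carries the prompt version and model ID, a flagged event can be tied back to the exact configuration that produced it.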

Documentation, Documentation, Documentation: Every single release needs meticulous records: prompt versions, data summaries, safety reports, and known limits. Some of this might even go public!
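One lightweight way to keep those per-release records consistent is a small structured template that refuses to be "done" until every section is filled in. The sketch below is only an assumption about what such a record could look like; the `ReleaseRecord` class and its field names mirror the items listed above and are not a format the law prescribes.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ReleaseRecord:
    """Documentation bundle for one release (hypothetical structure)."""
    release: str
    prompt_versions: list[str] = field(default_factory=list)
    data_summary: str = ""
    safety_report: str = ""
    known_limitations: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        # Any empty string or empty list counts as not yet documented.
        return [name for name, value in asdict(self).items() if not value]

    def to_json(self) -> str:
        # Stable key order makes records easy to diff between releases.
        return json.dumps(asdict(self), indent=2, sort_keys=True)
```

A release pipeline could call `missing_fields()` and block the launch while the list is non-empty, then archive the `to_json()` output alongside the build.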

Facing the Future: Challenges and Your Next Steps

No law is perfect, and this one has its quirks (like how to define a "frontier model" when tech moves so fast!). Small startups might also find the compliance costs daunting. But despite these challenges, it sets a vital precedent for responsible AI development.

Your Action Plan (The Smart Move!):

Audit Your AI: Seriously, go through all your AI features and assess their risks.

Version Control Your Prompts: Start tracking everything. (Again, LaikaTest's free prompt management is a great place to start!)

Boost Transparency: Make sure your product clearly explains its AI.

Incident Ready: Set up an internal process for handling and reporting AI safety issues.

Educate Your Team: Get everyone up to speed, from designers to developers to legal.

Invest in AI Tools: Look for tools that help with AI evaluation and monitoring.

California's new guardrail law is a game-changer. It's a clear signal that the Wild West days of AI are fading, replaced by an era of responsibility and transparency.

For product managers, engineers, designers, and founders, this isn't a roadblock; it's an opportunity. By embracing these principles now, you won't just comply with the law; you'll build better products, earn more user trust, and establish yourselves as leaders in the exciting, ever-evolving world of AI.

The future of AI is bright, and with smart guardrails, it can be safe too.

Want to get a head start on prompt versioning and AI testing? Try LaikaTest today! It's free for a limited time.
